Run Time Transparency

Overview

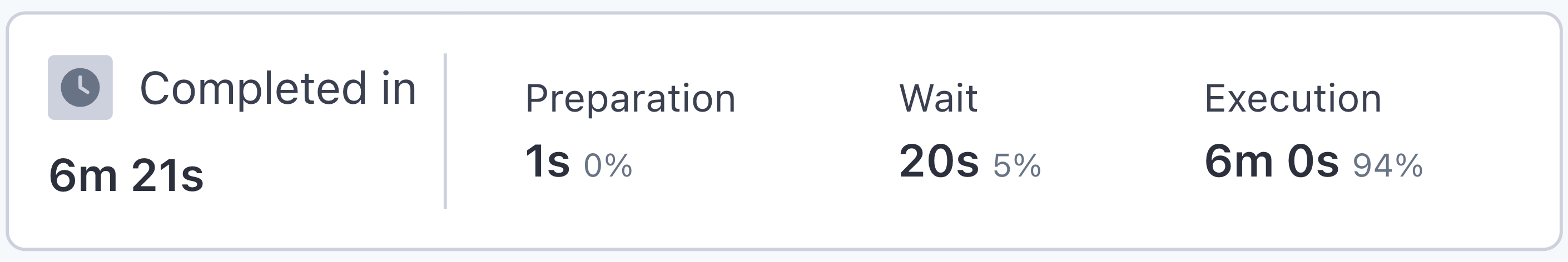

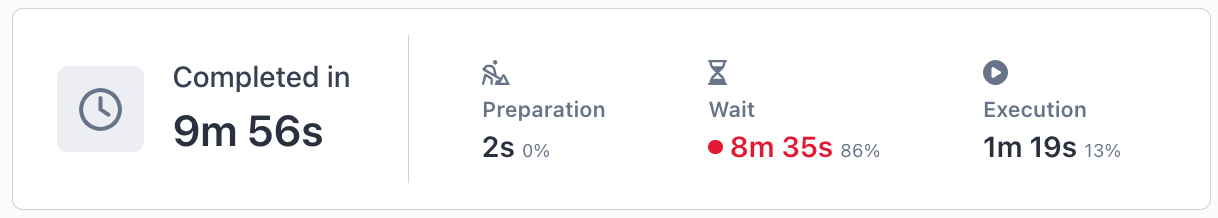

In the Summary section of a test run’s results page, you can find the total run time for the test broken down into three categories: preparation, wait, and execution. When appropriate, the system provides recommendations for reducing wait time so you get results faster.

How it works

On the test result page, the ‘Completed in’ box shows time spent in each phase of the run, along with actionable recommendations for reducing run time.

- Preparation indicates the time spent setting up the testing infrastructure and running your environment webhook, if applicable.

- Wait is the time the automation engine spent waiting for testing resources to become available. This can be affected by:

  - Waiting for previous tests to finish because of concurrency limitations your team has set.

  - Waiting for reusable dynamic data to become available.

  - Waiting for a virtual machine to become available.

- Execution displays the time it took to execute all of the steps in your test(s).

Note: When running a run group, the execution time will reflect the time it took for the longest test to complete.
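To illustrate how the three phases relate, here is a minimal sketch in Python. It assumes the total shown in the ‘Completed in’ box is the sum of the three phases, and that a run group's execution time is the duration of its longest test, as the note above describes. The names `PhaseTimes` and `run_group_execution` and all of the numbers are hypothetical and are not part of any Rainforest API.

```python
from dataclasses import dataclass


@dataclass
class PhaseTimes:
    """Time spent in each phase of a run, in seconds (hypothetical model)."""
    preparation: float
    wait: float
    execution: float

    @property
    def total(self) -> float:
        # Assumption: total run time is the sum of the three phases.
        return self.preparation + self.wait + self.execution


def run_group_execution(test_durations: list[float]) -> float:
    """Execution time for a run group: the longest individual test."""
    return max(test_durations)


# Three tests in a run group, taking 180, 240, and 95 seconds to execute.
execution = run_group_execution([180, 240, 95])
summary = PhaseTimes(preparation=45, wait=120, execution=execution)

print(summary.execution)  # 240
print(summary.total)      # 405
```

In this example, the 95-second and 180-second tests do not add to the execution time, because only the longest test in the group is reflected.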

When wait time is in red

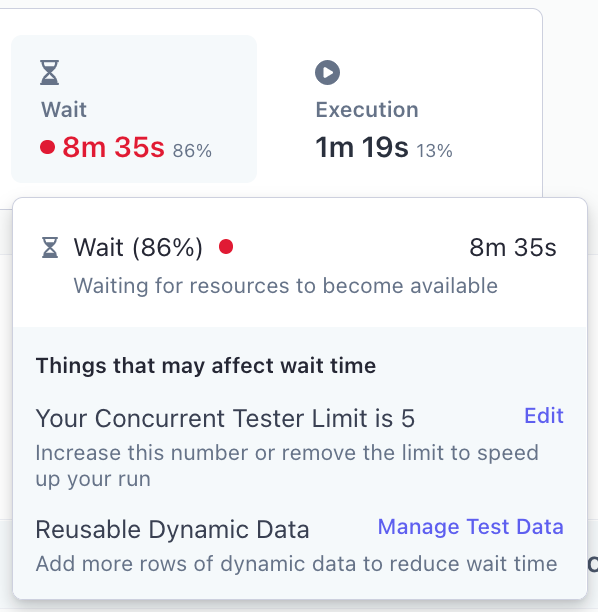

The preparation and wait times display in red when there are indications that the phase took longer than expected. Whenever possible, Rainforest provides recommendations to reduce wait time. Hover your cursor over the Wait section of the summary to see any recommendations.

Common recommendations are to add more rows of dynamic data or to adjust concurrency limits. If no recommendations are provided, a relatively long wait is most likely caused by a high volume of testing, which delays the availability of virtual machines. You can always reach out to [email protected] if you have concerns about long wait times.

Longer than expected wait time

Recommendations for reducing wait time for this run

FAQs

**Why would using dynamic data cause my runs to wait longer?** When using dynamic data, each row of data in the uploaded CSV file is assigned to its own test. If there are not enough rows available, the full test run will fail and you’ll receive an error message: “You ran out of step variable "[variable name]"!”. If the data is marked as reusable, our system operates on a ‘one in, one out’ policy, so tests may have to wait for a row to become available, which increases wait time.

**Why would using concurrency limits cause my runs to wait longer?** By setting a concurrency limit, you are restricting the number of tests in your run group that can run at the same time. This can cause significant waits for run groups with a lot of tests. For more information, see Managing the Concurrency Limit.
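To get a feel for how a concurrency limit turns into wait time, here is a simplified model in Python. It is only a sketch and not how Rainforest's scheduler is implemented: it assumes all tests are queued at the same moment, start in order, and that a new test starts as soon as a slot frees up. The function name `simulate_waits` and the durations are hypothetical.

```python
import heapq


def simulate_waits(durations: list[float], limit: int) -> list[float]:
    """Return how long each test waits before starting.

    Simplified model: every test is queued at time 0, and at most
    `limit` tests may run at the same time.
    """
    if limit < 1:
        raise ValueError("limit must be at least 1")

    running: list[float] = []  # min-heap of finish times of running tests
    waits: list[float] = []
    for duration in durations:
        if len(running) < limit:
            start = 0.0  # a slot is free right away
        else:
            # Wait for the earliest-finishing test to release its slot.
            start = heapq.heappop(running)
        waits.append(start)
        heapq.heappush(running, start + duration)
    return waits


# Six tests of 5 minutes each.
print(simulate_waits([5] * 6, limit=2))  # [0.0, 0.0, 5.0, 5.0, 10.0, 10.0]
print(simulate_waits([5] * 6, limit=6))  # [0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
```

Under these assumptions, a limit of 2 makes the last two tests wait 10 minutes before they start, while a limit of 6 removes the wait entirely. This is why raising the concurrency limit is a common recommendation for large run groups.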

If you have any questions, reach out to us at [email protected].
