Test Retries

Learn how the Automation Service helps you address test flakiness.

Overview

Flakiness describes tests that fail sporadically. These tests don’t produce the same result each time they run, even though neither the test nor the underlying code has changed. Common causes include:

- Network issues

- Concurrency or load issues

- Test environment sluggishness

- Test order dependency

- Nondeterministic behaviors in the application

- Issues with the test itself

- Intermittent bugs

Though some level of flakiness occurs in any test automation, unreliable results can hinder team productivity and undermine confidence in testing accuracy. For this reason, it’s essential to develop a strategy to mitigate flakiness and to isolate and handle flaky tests.

Rainforest can retry failed tests executed by our Automation Service to help reduce flaky results and unwanted continuous integration build failures. This saves your team valuable time and resources.

How It Works

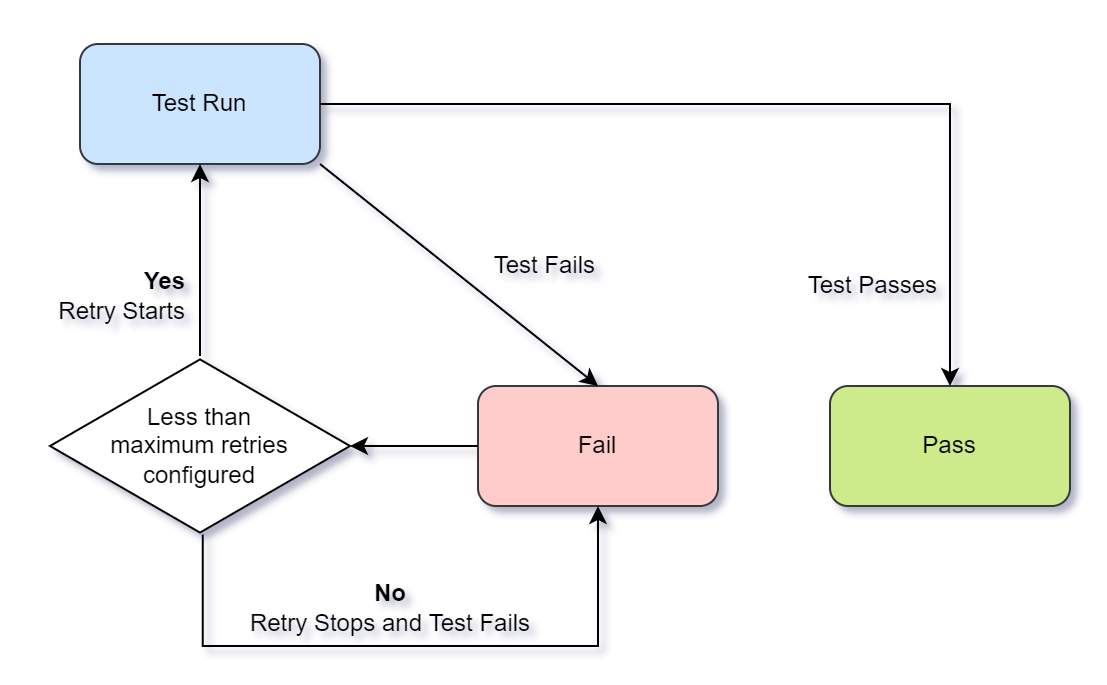

When you configure Test Retries, Rainforest automatically retries your failed test up to n times until a passed result is produced. For example, after specifying 2 retry attempts, Rainforest retries a failed test up to 2 additional times (for a total of 3 attempts) until a passed result is produced or until all attempts fail. All tests that didn’t pass are rerun. This includes failed tests, tests with no results, and errored tests.

Assuming we have configured Test Retries with 2 retry attempts, here is how a test might run:

- A test runs for the first time. If the test passes, the result is a Pass, and no retry attempts occur.

- If the test fails, Rainforest immediately attempts to run the test a second time.

- If the test passes on the second attempt, the result is a Pass, and no further retries occur.

- If the test fails on the second attempt, Rainforest attempts to run the test a third and final time.

- If the test passes on the third attempt, the result is a Pass.

- If the test fails on the third attempt, the result is a Fail.

How Test Retries works.
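The flow above can be sketched in a few lines of Python. This is only an illustration of the retry logic as described here, not Rainforest's implementation; `run_with_retries`, `is_flaky`, and the `run_test` callable are hypothetical names introduced for the example.

```python
from typing import Callable, List, Tuple


def run_with_retries(run_test: Callable[[], bool], max_retries: int) -> Tuple[str, List[bool]]:
    """Run a test once, then retry on failure up to max_retries more times.

    run_test is a hypothetical callable that returns True when the test passes.
    """
    attempts: List[bool] = []
    for _ in range(max_retries + 1):  # 1 initial attempt + max_retries retries
        passed = run_test()
        attempts.append(passed)
        if passed:
            # Stop at the first pass; no further retries occur.
            return "Pass", attempts
    # Every attempt failed.
    return "Fail", attempts


def is_flaky(attempts: List[bool]) -> bool:
    """A result is flaky when a failure passed after being retried."""
    return len(attempts) > 1 and bool(attempts[-1])


# Simulate a test that fails twice and passes on the third attempt.
outcomes = iter([False, False, True])
result, attempts = run_with_retries(lambda: next(outcomes), max_retries=2)
print(result, attempts, is_flaky(attempts))
```

With 2 retry attempts configured, the simulated test above ends as a Pass after 3 attempts and would be flagged as flaky.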

The goal is to reduce unwanted noise in your test results while providing useful information to help you investigate and address sources of flakiness. For this reason, we flag flaky test results and provide debugging tools.

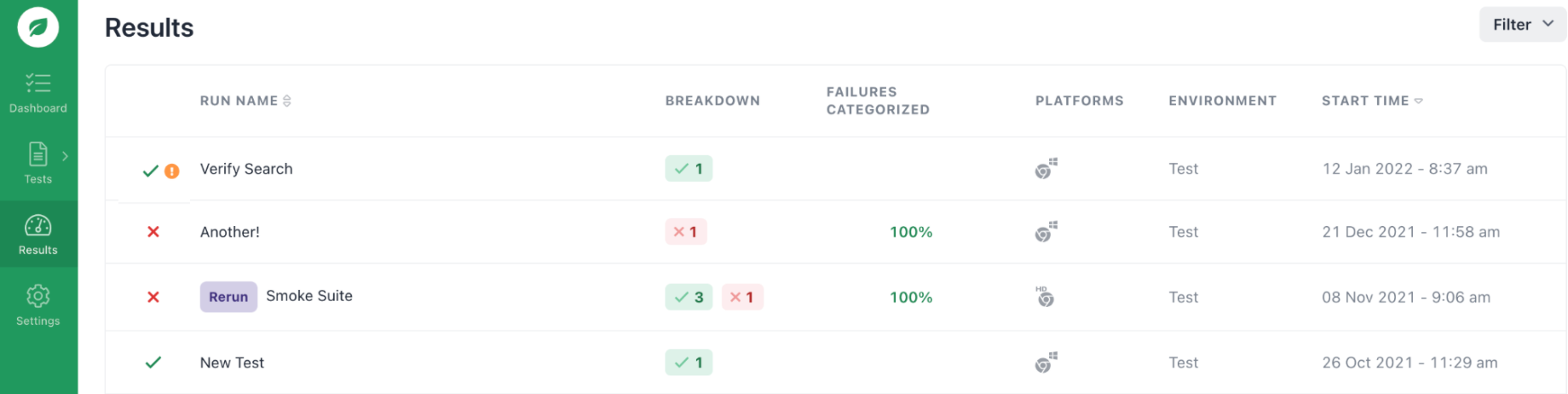

Runs containing at least one inconsistent test result (a failure that passed when retried) are flagged with an exclamation icon.

The Run Results page.

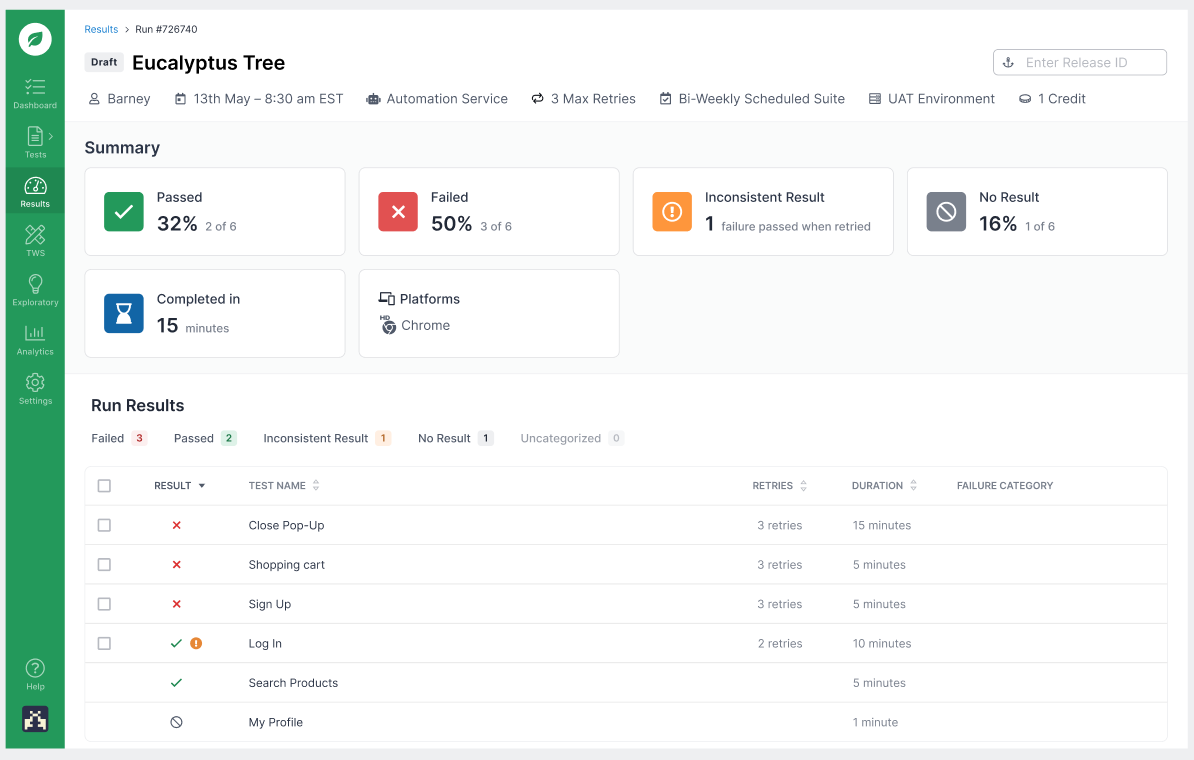

On the Run Summary page, tests that produced a flaky result are flagged with an exclamation icon, and the number of retry attempts is noted.

The Run Summary page.

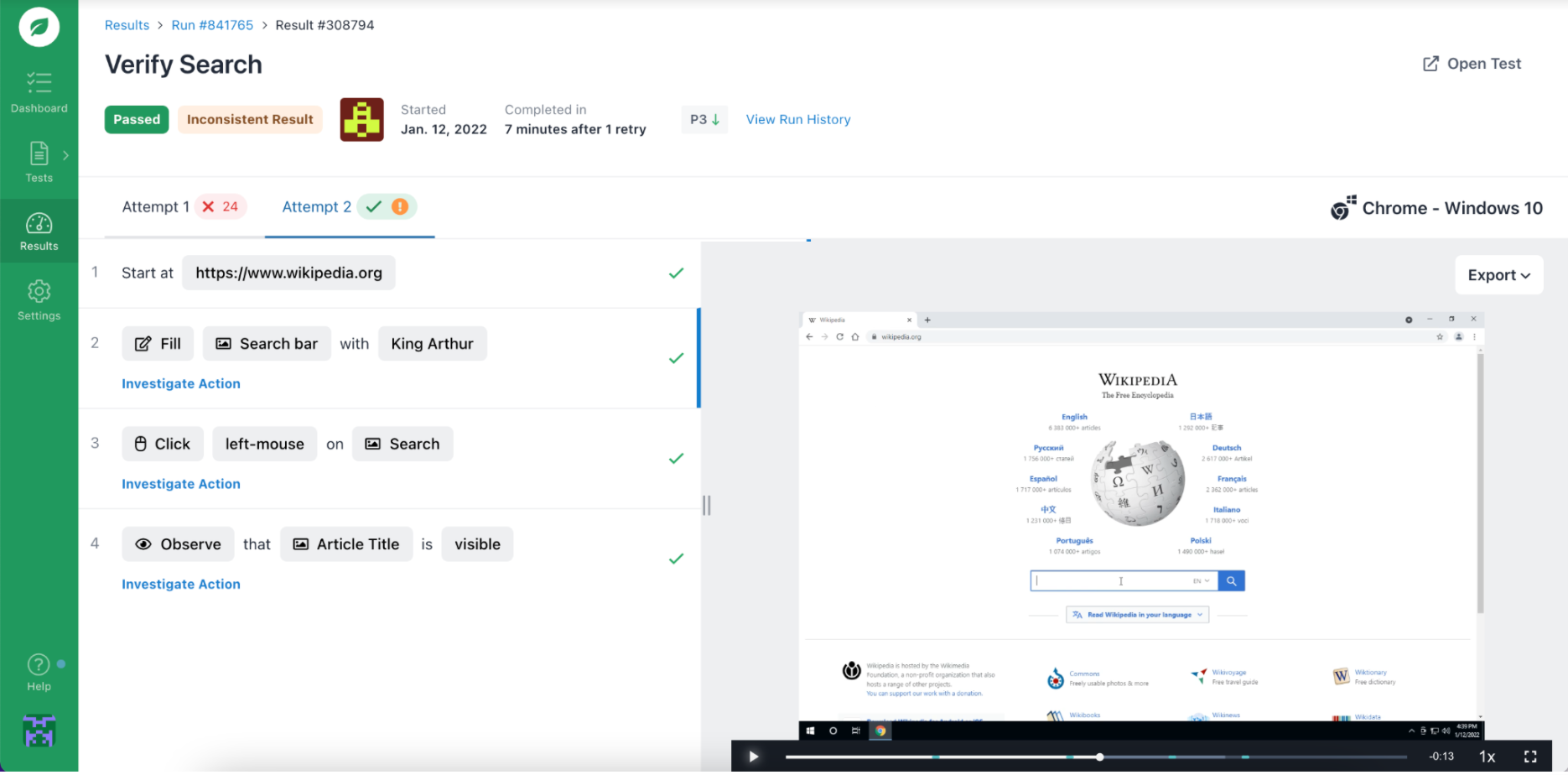

The Test Results page displays the result of each retry attempt, along with the reproduction video. With this information, you can investigate and debug any failed retry attempt, even when the ultimate result is a passed test.

The Test Results page.

Configuring Test Retries

Test Retries is configured as a global setting, though you have the option to override this setting for any test run.

Global Configuration

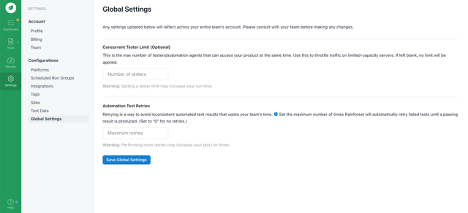

You configure the maximum number of retries on the Global Settings page. When the setting is greater than 0, any Automation Service test that fails is retried up to the maximum number of times or until a passed result is produced.

If your account was created after January 12, 2022, your global setting is automatically configured for 1 maximum retry attempt, though you can modify it.

Note: The maximum number you can configure is 3, for a total of 4 attempts.

The Global Settings page.

Custom Configurations

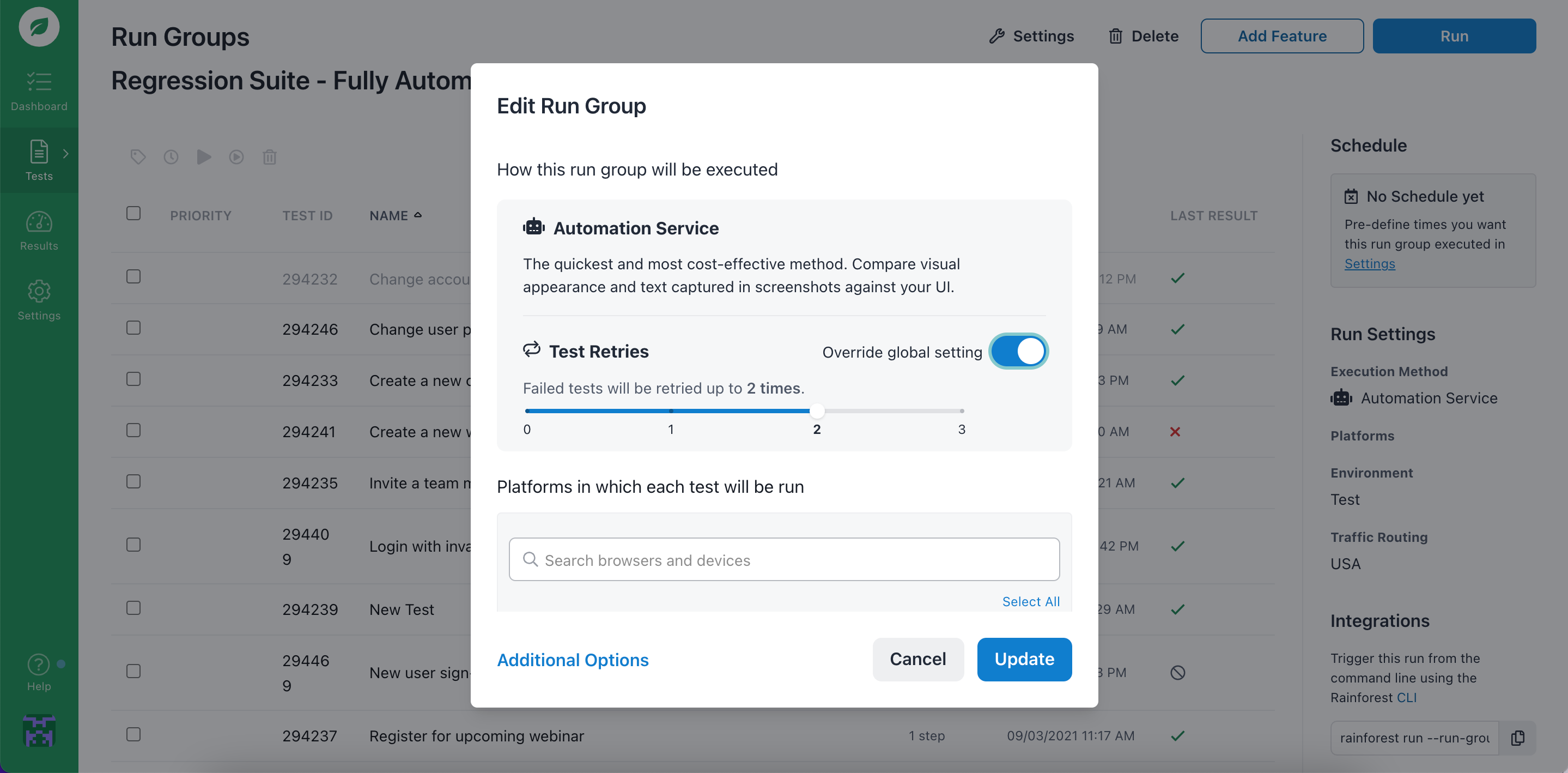

You can override the global setting when you run tests or create a run group. Doing so is useful for situations where you might want to apply a different number of retries or disable retries altogether.

When running tests or creating run groups, “Use Test Retries global setting” defaults to on. This means that your run inherits the value configured in Global Settings, which could be 0, 1, 2, or 3. You have the option to toggle this setting to off and apply your own value.
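As a rough model of how the two settings interact, the sketch below resolves the retry count for a run. It assumes the override is subject to the same 0 to 3 range as the global setting; the function and parameter names are hypothetical and do not correspond to a Rainforest API.

```python
from typing import Optional

MAX_ALLOWED_RETRIES = 3  # the documented maximum: 3 retries, 4 attempts in total


def effective_max_retries(
    global_setting: int,
    use_global: bool = True,
    override: Optional[int] = None,
) -> int:
    """Return the retry count a run would use.

    use_global mirrors the "Use Test Retries global setting" toggle, which
    defaults to on. When it is off, the run's own value (override) applies.
    """
    value = global_setting if use_global else override
    if value is None:
        raise ValueError("an override value is required when use_global is False")
    if not 0 <= value <= MAX_ALLOWED_RETRIES:
        raise ValueError(f"retries must be between 0 and {MAX_ALLOWED_RETRIES}")
    return value


print(effective_max_retries(global_setting=1))                                # inherits 1
print(effective_max_retries(global_setting=1, use_global=False, override=0))  # retries disabled
```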

The New Run modal.

Running Tests from the CLI

You can use the --automation-max-retries flag in the CLI to override the setting for a specific run. If you omit the flag, the default from your account or run group is used.
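If you launch runs from a script, a small helper can add the flag only when an override is wanted. In this sketch, only `--automation-max-retries` comes from this article; the `rainforest run` subcommand and the `--run-group` option are assumptions about the CLI, so check the CLI's help output before relying on them. Authentication is omitted, and `build_run_command` is a hypothetical helper name.

```python
from typing import List, Optional


def build_run_command(run_group_id: int, max_retries: Optional[int] = None) -> List[str]:
    """Build an argument list for a CLI run, optionally overriding retries."""
    # Assumed invocation; verify the subcommand and option names in the CLI help.
    cmd = ["rainforest", "run", "--run-group", str(run_group_id)]
    if max_retries is not None:
        # Omitting the flag leaves the account or run group default in effect.
        cmd += ["--automation-max-retries", str(max_retries)]
    return cmd


print(build_run_command(123))
print(build_run_command(123, max_retries=0))
```

The returned list can be passed to `subprocess.run` once the command shape has been confirmed for your CLI version.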

FAQ

Are retried attempts counted in my billing?

Yes, you are charged for test retry attempts unless you’re participating in a free trial of Rainforest.

When considering cost, remember that only failed tests are retried, and only until a passing result is produced (or all attempts fail). If your test suite is well maintained, failures should be uncommon. For this reason, the cost impact of Test Retries should be minimal.
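To get a rough sense of the cost impact, you can estimate the average number of attempts per test. The sketch below assumes every attempt fails independently with the same probability, which is a simplification (real failures are often correlated); `expected_attempts` is a hypothetical helper, not a Rainforest billing formula.

```python
def expected_attempts(failure_rate: float, max_retries: int) -> float:
    """Average attempts per test when each attempt fails with failure_rate.

    Attempt k + 1 only happens if the first k attempts all failed, which has
    probability failure_rate ** k under the independence assumption.
    """
    if not 0.0 <= failure_rate <= 1.0:
        raise ValueError("failure_rate must be between 0 and 1")
    if max_retries < 0:
        raise ValueError("max_retries must be non-negative")
    return sum(failure_rate ** k for k in range(max_retries + 1))


# A suite where 5% of attempts fail, with 2 retries configured.
print(round(expected_attempts(0.05, 2), 4))
```

Under these assumptions, a 5% failure rate with 2 retries adds roughly 5% to the number of attempts, which is consistent with the cost impact being small for a well-maintained suite.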

How do I know if Test Retries is a good fit for me?

It depends. Some level of results inconsistency is unavoidable with any test automation. Automatically rerunning failed tests might be a reasonable proactive measure. However, it depends on the extent to which unreliable results are a problem.

For example, suppose you have an unreliable testing environment that behaves inconsistently or is prone to intermittent issues. In that case, Test Retries can help prevent unwanted noise. However, if your tests aren’t prone to inconsistent results, rerunning failed tests might not be necessary.

What should I do with the flaky test results (a failure that passes when retried)?

In general, we recommend you investigate and resolve sources of test flakiness. Though Test Retries can prevent flaky results from failing your runs and blocking your build pipeline, allowing flakiness to persist is inadvisable. Flaky results slow down your test runs due to time spent on retries, and they can hint at more serious issues such as intermittent bugs or problems with your test environment or data management practices.

You might not need to investigate flaky test results with the same urgency that you’d address failures blocking your continuous integration pipeline. Nevertheless, it’s still advisable to investigate and resolve any flakiness.

If I’ve configured Rainforest webhooks, how does Test Retries work with them?

Depending on what type of webhook you’ve configured, it continues to work normally, either running at the start or finish of your test run.

How does Test Data work with Test Retries?

Test Data works normally with retry attempts. When you leverage Built-In Data such as random email addresses and inboxes, each retry attempt is allocated a different variable value. For Dynamic Data such as login credentials, each retry attempt is also given a different variable value. If your uploaded CSV file contains fewer rows of data than required, the run fails. See Dynamic Data for information on calculating the number of rows required for a test run.

Is there a way to configure unique retry settings for individual tests?

If you only want to retry a few tests, set “Maximum retries” on the Global Settings page to 0. Then, when you run the tests you want to retry, toggle “Use Test Retries global setting” off and apply your own value. See Test Retries for more information.

If you have any questions, reach out to us at [email protected].
